During GDC 2024 in San Francisco, we hosted the Revenue Optimization in Games Mini-Summit. Industry leaders gave four fascinating presentations about revenue optimization in gaming.

See our Reporting from the Game Revenue Optimization Mini-Summit follow-up post to learn more!

Recently, I went on the Game Economist Podcast (I’m on episode 19). It was a great conversation, but I was slightly surprised at how much it focused on my former company, Scientific Revenue, and the differences between Scientific Revenue and Game Data Pros (where I am the founding CEO).

In retrospect, I shouldn’t have been. “How is Game Data Pros different from Scientific Revenue?” is a question I get asked at least twice a week. Given that both companies were founded by the same people and are data-oriented companies working in gaming, it’s a fair question.

The two companies are very different:

- Game Data Pros is a consulting company focused on optimizing games (and other forms of digital entertainment). We provide software and analytical systems that help people at game companies make decisions that will improve their games. Most of the time, that improvement is measured using one of the standard metrics (retention, LTV, acquisition cost, …), and the focus is often on the player’s journey as they play multiple games.

- Scientific Revenue was a machine learning startup that provided automated dynamic pricing to mobile games. It shipped an SDK for data collection, stored massive data sets in its data center, and then analyzed those data sets to improve pricing strategies. The goal of Scientific Revenue was to automatically change the prices a player sees in-game and thereby increase short-term revenue.

But it’s also true that Game Data Pros is strongly influenced by the lessons learned at Scientific Revenue. Here are five of the most important.

1. Optimization is Hard. Really Really Hard.

You’d think this would be obvious. Amazon has hundreds of texts on mathematical optimization, and the math involved can be ferocious. But it can be very seductive to say: let’s have domain experts propose alternative sets of prices and then use an experimentation framework to evaluate them (this can be as simple as AB testing or something more complex, like multi-armed bandits).
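To make the "experts propose, experiments evaluate" idea concrete, here is a minimal sketch of an epsilon-greedy bandit choosing among expert-proposed prices. Everything in it is an illustrative assumption rather than how any production system works: the function names, the three candidate prices, and especially the toy demand model in `simulate_purchase`, which stands in for real player behavior.

```python
import random


def simulate_purchase(price, rng):
    """Hypothetical demand model: purchase probability falls as price rises."""
    purchase_probability = max(0.0, 0.12 - 0.01 * price)
    return price if rng.random() < purchase_probability else 0.0


def run_epsilon_greedy(price_sets, simulate, n_players=5000, epsilon=0.1, seed=0):
    """Show each player one candidate price, mostly exploiting the best arm so far."""
    rng = random.Random(seed)
    shown = {name: 0 for name in price_sets}
    revenue = {name: 0.0 for name in price_sets}

    def mean_revenue(name):
        return revenue[name] / shown[name] if shown[name] else 0.0

    for _ in range(n_players):
        untried = [name for name in price_sets if shown[name] == 0]
        if untried:
            arm = untried[0]                       # try every candidate at least once
        elif rng.random() < epsilon:
            arm = rng.choice(list(price_sets))     # explore a random candidate
        else:
            arm = max(price_sets, key=mean_revenue)  # exploit the best so far
        shown[arm] += 1
        revenue[arm] += simulate(price_sets[arm], rng)

    return {name: (shown[name], mean_revenue(name)) for name in price_sets}


# Candidate prices proposed by (hypothetical) domain experts.
candidates = {"low": 1.99, "mid": 4.99, "high": 9.99}
for name, (count, mean) in run_epsilon_greedy(candidates, simulate_purchase).items():
    print(f"{name}: shown {count} times, mean revenue per player {mean:.3f}")
```

Note what the sketch leaves out: it treats every player as independent and every purchase as immediate, which is exactly where the real difficulties start.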

Even then, it’s still not easy. You have to worry about spillover effects (what if players talk to each other), consumer behavior (what if people start to wait for sales instead of buying on a regular basis), the possibility of consumer reaction (see the reaction to a pricing experiment run by Zynga for a great example of what happens if you don’t think about community reaction when you do an AB test), and so on.

(The experienced gaming data scientists have already sadly noted, “he’s assuming the data coming from the game is correct.” No, I’m not. But that’s a whole other lesson learned. See below).

And that’s just for price optimization. If you want to think about a more general optimization framework that takes into account other parametrized game configurations and then tries to optimize the game, the problem is much bigger and orders of magnitude more difficult (for example, any such calculation needs something like Julian Runge’s framework for engagement engineering as an essential component).

One very interesting aspect of this is that the scale of the problem and the number of people required to address it seem obvious when you sit down and sketch it out. But if you go to a game company with a title that’s doing well and ask them, “How many data scientists are working on optimizing this game,” the answer is usually somewhere between 3 and 10.

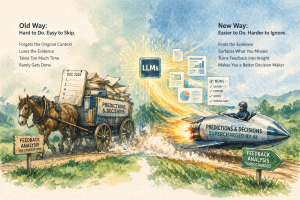

2. The World Doesn’t Want Automation. Not Really.

There was this proof of concept we did at Scientific Revenue. We were working with a major gaming company. We integrated our pricing engine into one of their mobile games, set things up, and automated some of the pricing decisions. The result was a double-digit increase in in-game revenue.

And yet, the customer decided not to move forward. Why? I’m writing this years later, from memory, but the explanation went something like, “We realized how uncomfortable we were with outsourcing pricing decisions to a third party. And how much more uncomfortable we were knowing that it was an automated process controlled by a third party.”

Simply put, companies want control over something as crucial as pricing decisions.

I mentioned this over coffee in 2019 to Tom Lounibos, an EIR at Accenture at the time. And he pointed out that this was a central insight in the book “Human + Machine” by Paul Daugherty, the CTO of Accenture. The human need to be in control means full automation is almost always a bad product decision.

3. Dynamic Pricing of In-Game Goods is Only Part of the Puzzle and Not the Most Important Part

Dynamic pricing is an incredibly attractive technique for three fundamental reasons: it’s simple, it’s easily measured, and people have been doing it for centuries.

- It’s simple because you’re only changing the prices of goods and services (and possibly adding special offers or a subscription). It’s not a gameplay change and, aside from the in-game shop, there isn’t a lot of code to change.

- It’s easy to measure because there’s generally a consensus that if you spot-check revenue at 3 points (D3, D14, and D30) with a guardrail metric around retention, you can feel confident that you’ve made a good change.

- Because people have been doing it for centuries, it has a well-established vocabulary and a vast body of knowledge. If someone says, “Have we tried price skimming on our targeted offers,” you can google and easily find any number of great explanations.
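The measurement recipe in the second bullet (revenue at D3, D14, and D30, plus a retention guardrail) can be sketched in a few lines. This is a rough illustration only: the `Player` structure, the choice of D7 as the guardrail day, and the one-point retention threshold are assumptions made for the example, and a real analysis would add significance testing before trusting any lift.

```python
from dataclasses import dataclass

CHECKPOINT_DAYS = (3, 14, 30)


@dataclass
class Player:
    purchases: list   # (day_since_install, amount) tuples
    active_days: set  # days since install on which the player opened the game


def revenue_per_player(players, day):
    """Cumulative revenue per player up to and including the given day."""
    total = sum(amount for p in players for d, amount in p.purchases if d <= day)
    return total / len(players)


def retention(players, day):
    """Share of players who were active on the given day."""
    return sum(1 for p in players if day in p.active_days) / len(players)


def spot_check(control, variant, guardrail_day=7, max_retention_drop=0.01):
    """Compare revenue at each checkpoint and confirm retention did not fall too far."""
    report = {}
    for day in CHECKPOINT_DAYS:
        lift = revenue_per_player(variant, day) - revenue_per_player(control, day)
        report[f"D{day}_revenue_lift"] = lift
    drop = retention(control, guardrail_day) - retention(variant, guardrail_day)
    report["guardrail_ok"] = drop <= max_retention_drop
    report["ship"] = report["guardrail_ok"] and all(
        report[f"D{day}_revenue_lift"] > 0 for day in CHECKPOINT_DAYS
    )
    return report
```

A pricing change "passes" here only if revenue is up at all three checkpoints and the guardrail holds, which mirrors the consensus described above.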

However, the industry consensus is that various other focus areas matter more. For example, most game monetization experts argue that “getting retention right” is far more important for overall game revenue (see, for example, Will Luton’s writeup at the Department of Play).

You’ll also hear people say that presentation matters more, that lowering the cost of user acquisition is the most important thing, or that the ability to cross-promote your other games to players who are about to churn is the highest-value thing. Or that being able to tune in-game messaging has the highest ROI. Or that well-managed events are the single most important tool for revenue optimization. Or that ….

What rapidly becomes clear is that a comprehensive data-oriented revenue optimization strategy requires many touchpoints, both in and outside the game, and a robust framework for manipulation and causal attribution.

4. Game Company Data is Often Unreliable, but Data-Capture SDKs are Fatal to the Sales Process

Games are among the most data-centric applications on the planet. A game telemetry system often has hundreds or thousands of distinct events that measure what the players are doing and where they’re doing it. In the abstract, it’s awesome.

However, game data sets are usually permeated with subtle bugs, assumptions, and gaps.

At Scientific Revenue, we built an SDK for game data collection that collected the information we needed. That decision involved a vast number of tradeoffs. On the positive side, we didn’t have to learn a new database schema for each customer: we could rely on the events captured by our SDK. And when we compared our measurements to our customers’ data (while we were calibrating the data models), we routinely saw a 10 to 20% difference in key metrics, which validated the decision to do the data collection ourselves.

On the negative side, most game companies don’t want to integrate third-party code into their game. And, increasingly, compliance requirements mean they can’t ship data to a third-party data center in any case.

We never actually solved this at Scientific Revenue. We had an SDK, and our insistence that customers use it was an endless frustration to our sales team. Our best estimate was that this requirement immediately eliminated 30% of our customer pipeline. And even when companies were willing to integrate the SDK, the time it took engineering to get around to the integration in the face of other priorities killed even more deals.

At Game Data Pros, we’ve invested in a Data Archaeology team whose job is to deeply understand customer data sets and help us do feature engineering. That is, they first understand the customer data and its structure completely. Then they write transformations that take petabytes of player data and boil it down to the features we need to build models.

This is a much more hands-on approach than Scientific Revenue took. It requires us to have access to player data, and it requires us to work inside the customer data center (for compliance reasons). But, at the same time, it also delivers much higher value for the customer: we can provide accurate data quality assessments and write detailed bug descriptions. And the features we create are often useful in other contexts.
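As a toy illustration of what such a transformation looks like, the sketch below boils raw telemetry events down to per-player features while counting the events it could not use. The event schema (`player_id`, `type`, `amount`) and the feature names are hypothetical, and a real pipeline would run on a distributed engine inside the customer's data center rather than over an in-memory list.

```python
from collections import defaultdict

KNOWN_EVENT_TYPES = ("session_start", "purchase")


def build_player_features(events):
    """Aggregate raw events into per-player features; count events we had to skip."""
    features = defaultdict(lambda: {"sessions": 0, "purchases": 0, "revenue": 0.0})
    skipped = 0
    for event in events:
        player_id = event.get("player_id")
        kind = event.get("type")
        if player_id is None or kind not in KNOWN_EVENT_TYPES:
            skipped += 1  # missing player or an event type we do not model
            continue
        if kind == "session_start":
            features[player_id]["sessions"] += 1
            continue
        amount = event.get("amount")
        if not isinstance(amount, (int, float)) or amount <= 0:
            skipped += 1  # purchase with a missing or nonsensical amount
            continue
        features[player_id]["purchases"] += 1
        features[player_id]["revenue"] += float(amount)
    return dict(features), skipped
```

The `skipped` count is the seed of a data quality assessment: a high number points at telemetry gaps worth writing up as bug reports.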

5. You Can’t Optimize from the Outside

The second lesson (above) was that game companies don’t want automation. This lesson is that automation is probably the wrong approach anyway. To understand why, remember that games change all the time in the modern gaming world. Mobile games change faster than desktop games. And desktop games change faster than console games. But most recent games have an update cadence that would shock game designers from the 1990s.

If you’re building a revenue optimization system, you have to know about changes made to the game. Significant game changes affect player behavior enormously (that is the point, after all, of changing the game). And that means an entirely automated process that dynamically tunes pricing (which was the Scientific Revenue vision) is probably not the best approach. Instead, working collaboratively with game and publishing teams to update integrations, take advantage of new data, and invalidate models proactively is a better approach for most games.
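One way to picture "invalidating models proactively" is a registry in which each model declares the game systems it depends on, so a release touching those systems marks the model stale before it drifts. This is a hypothetical sketch: the `PricingModel` class, the system names, and the idea of a per-release list of changed systems are all assumptions for illustration, not a description of any actual tooling.

```python
from dataclasses import dataclass


@dataclass
class PricingModel:
    name: str
    depends_on: frozenset  # game systems whose behavior the model was trained on
    valid: bool = True


def invalidate_for_release(models, changed_systems):
    """Mark every still-valid model that depends on a changed system as stale."""
    changed = set(changed_systems)
    stale = []
    for model in models:
        if model.valid and model.depends_on & changed:
            model.valid = False
            stale.append(model.name)
    return stale


models = [
    PricingModel("starter_pack_offer", frozenset({"shop", "tutorial"})),
    PricingModel("event_bundle", frozenset({"events"})),
]
# Hypothetical release notes from the game team: the tutorial was reworked.
print(invalidate_for_release(models, ["tutorial"]))
```

The list of changed systems can only come from the game and publishing teams, which is why this works as a collaboration and not as a black box.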

In summary, Game Data Pros is a consulting firm specializing in optimizing games and digital entertainment. Our focus lies in providing software and analytical tools to aid game companies in making informed decisions to enhance their products. We measure improvements through standard metrics like retention, LTV, and acquisition cost, often concentrating on enhancing the player experience across multiple games.

But we’re building on the things we’ve done before. One of the crucial learning experiences was at Scientific Revenue, where we built an automated, machine-learning-based dynamic pricing solution for mobile games. That experience, and the knowledge gained, have helped to inform how Game Data Pros thinks about optimization.